The following data collection methods were selected to provide both qualitative and quantitative data. Because the data came from multiple methods, the collection addressed diverse learning needs.

#1 Likert Scale Survey


A nine-question Likert scale survey was used to measure students' confidence in their ability to solve word problems. It was administered during the class period following each of the three unit tests: square roots, exponents, and equations. Students rated each statement as strongly agree, agree, neutral, disagree, or strongly disagree.


The Likert scale measured changes in students' confidence levels. Sometimes the problem is not that students don't know what to do, but that they think they don't know what to do. The goal was that, after repeatedly using these strategies, students would not panic when they saw a word problem. Ideally, students' responses would become more positive on each successive survey.


Analysis

Question #4

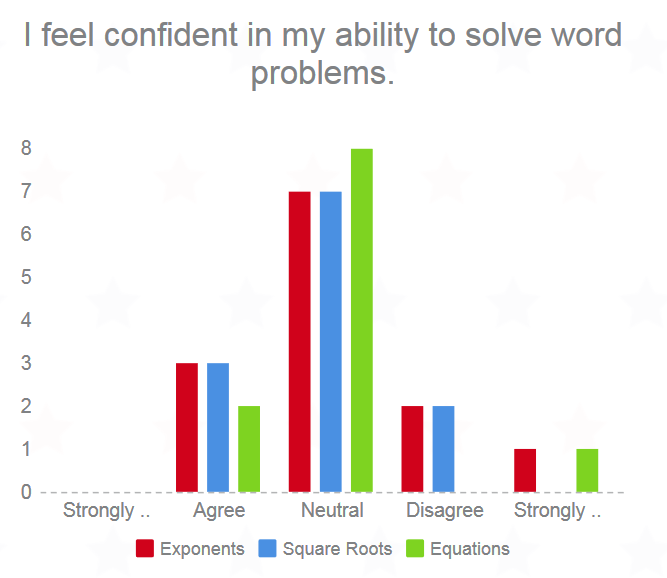

The surveys gave me useful information about how my students felt. Because I was the only one who saw their responses, I think they felt more comfortable answering truthfully. One of the statements I was most interested in was "I feel confident in my ability to solve word problems." The bar graph above shows the students' responses. Over time, their confidence level did not increase, but it did not noticeably decrease either. This did not surprise me, because the way students talked about word problems did not become more positive over time.


Likert Scale Question #8

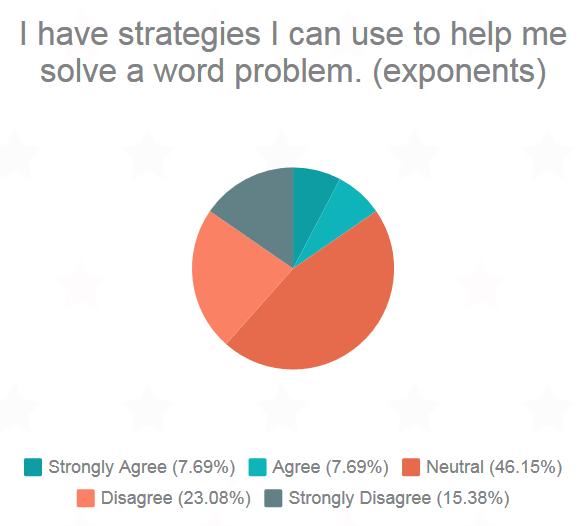

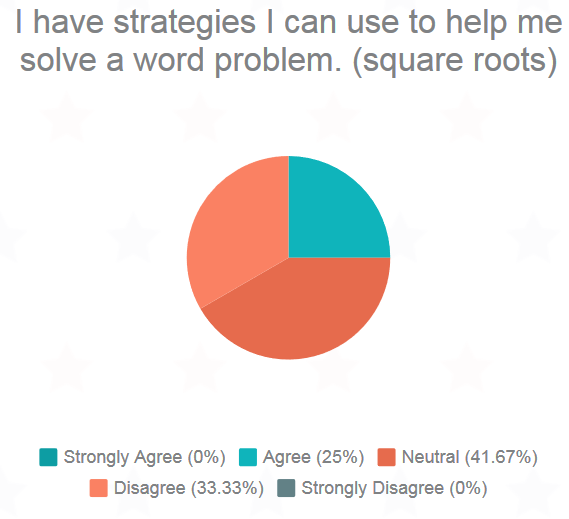

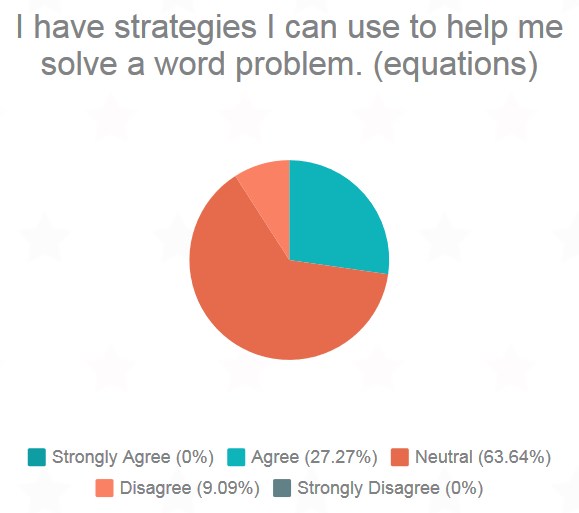

Because students' confidence did not change much, I decided to look at question #8, which stated "I have strategies I can use to help me solve a word problem." Pie charts of students' responses after each test are shown above.

Between Test 1 (Square roots) and Test 2 (Exponents), the combined percentage of students who strongly agreed or agreed rose from 15.38% to 25%, while the combined percentage of students who strongly disagreed or disagreed dropped from 38.46% to 33.33%. Although students still did not feel entirely confident in their ability to solve word problems, they felt they had learned some strategies they could put to use.

After Test 3 (Equations), the strongly agree/agree responses rose slightly again, to 27.27%, and the strongly disagree/disagree responses dropped all the way to 9.09%. However, far more students gave a neutral response. I think more students felt neutral at this point because so few of them attempted the word problems on this test; they could not form an opinion about something they had not done.
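The combined percentages above come from adding the two agreeing categories and the two disagreeing categories, then dividing by the number of students who responded. The minimal Python sketch below shows that arithmetic. The function name and dictionary keys are hypothetical, and it is not the tool used to produce the pie charts.

```python
def collapse_likert(counts):
    """Collapse five-point Likert counts into agree / neutral / disagree percentages.

    `counts` maps each response label (hypothetical keys below) to the number
    of students who chose it. Percentages are rounded to two decimal places.
    """
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no responses to summarize")
    agree = counts.get("strongly agree", 0) + counts.get("agree", 0)
    disagree = counts.get("strongly disagree", 0) + counts.get("disagree", 0)
    neutral = counts.get("neutral", 0)
    return {
        "agree": round(100 * agree / total, 2),
        "neutral": round(100 * neutral / total, 2),
        "disagree": round(100 * disagree / total, 2),
    }
```

For example, with made-up counts, 3 agreeing responses out of 12 respondents would give an "agree" share of 25.0%.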


#2 Unit Test Word Problems

The word problems on each of the three unit tests were examined, focusing on the number of problems students attempted and the accuracy of their solutions. These unit tests are prepared by the district. Two-sample t-tests were conducted between the first and second unit tests, the second and third unit tests, and the first and third unit tests to see whether the differences in the number of problems attempted were significant. The same was done to check for a significant difference in the number of problems answered correctly. The unit tests were used as the pre- and post-tests because their word problems covered material the students had recently learned. It did not make sense to give students pre- and post-tests over a random assortment of word problems; this could have confused them and led to inaccurate results. The unit tests fit into the curriculum and would have been administered anyway, so students did not do extra work for this action research.
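The comparison described above can be sketched in Python with only the standard library. This is a minimal sketch under stated assumptions: each test's data is a list of per-student counts (for example, word problems attempted out of 4), and the pooled-variance (Student's) form of the two-sample t-test is used. The source does not say which variant or software was used, and the function names here are hypothetical. The p value is found by numerically integrating the t distribution's density with Simpson's rule.

```python
import math
from statistics import mean, variance


def pooled_t_test(a, b):
    """Two-sample Student's t-test assuming equal variances (an assumption).

    Returns (t statistic, degrees of freedom, two-sided p value).
    """
    n1, n2 = len(a), len(b)
    df = n1 + n2 - 2
    # Pooled variance: each group's sample variance weighted by its degrees of freedom.
    sp2 = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / df
    se = math.sqrt(sp2 * (1 / n1 + 1 / n2))
    if se == 0:
        raise ValueError("both samples have zero variance; t is undefined")
    t = (mean(a) - mean(b)) / se
    return t, df, two_sided_p(t, df)


def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_coef = math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
    coef = math.exp(log_coef) / math.sqrt(df * math.pi)
    return coef * (1 + x * x / df) ** (-(df + 1) / 2)


def two_sided_p(t, df, steps=10_000):
    """P(|T| >= |t|), integrating the density from 0 to |t| with Simpson's rule."""
    upper = abs(t)
    h = upper / steps  # steps must be even for Simpson's rule
    total = t_pdf(0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        weight = 4 if i % 2 else 2  # odd interior points get 4, even get 2
        total += weight * t_pdf(i * h, df)
    central_half = total * h / 3  # area under the density between 0 and |t|
    # The density is symmetric, so both tails together are 1 - 2 * central_half.
    return max(0.0, 1 - 2 * central_half)
```

Under the 0.05 threshold used in this study, a returned p value below 0.05 would count as a significant difference. For the pooled-variance case, `scipy.stats.ttest_ind(a, b)` returns the same statistic and p value without hand-written integration.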

Analysis

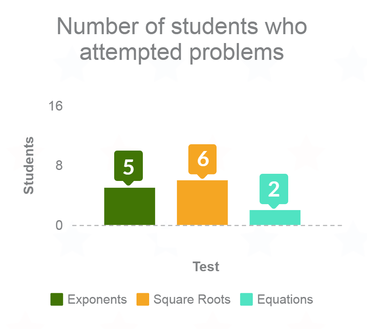

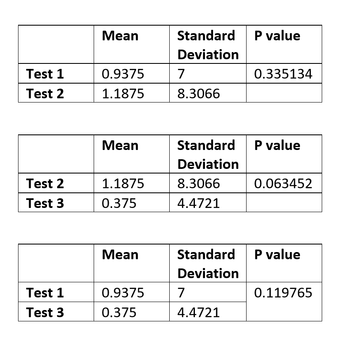

Problems Attempted

The third test, which covered equations, had the fewest students attempt even one word problem. This is likely because students did not have a strong understanding of the material. Although the mean number of problems attempted (out of 4 total problems) increased from Test 1 to Test 2, the difference was not significant: the p value from the t-test was 0.335134, which is greater than 0.05. The mean number of problems attempted decreased from Test 2 to Test 3, but again the difference was not significant, as the p value was 0.063452. From Test 1 to Test 3, the mean number of problems attempted also decreased; this difference was not significant either, as the p value was 0.119765. I noticed that when a student tried a word problem, the answer was usually correct. It seemed that students who did not know what to do simply left the problem blank, as if they felt it was better not to try at all than to try and fail.

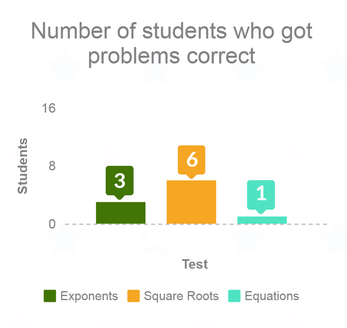

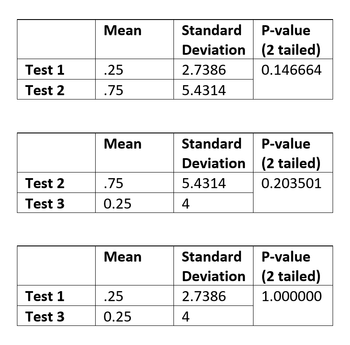

Problems Correct

The three unit tests covered three different topics: square roots, exponents, and equations. The varying number of students who answered problems correctly led me to believe that students may not have had a strong understanding of the material they needed to solve the word problems. The students struggled with even the level two questions on the equations test. It is difficult to draw conclusions about their understanding of word problems on this test when they did not have a strong understanding of one- and two-step equations. Although the mean number of word problems answered correctly increased from Test 1 (square roots) to Test 2 (exponents), the difference was not significant. The p value for this t-test was 0.146664; for the difference to be significant, the value would have to be less than 0.05. The mean number of word problems answered correctly actually decreased from Test 2 (exponents) to Test 3 (equations). I did not find this surprising, as my students struggled to solve equations even before word problems were involved. However, the difference was not significant, as the p value was 0.203501. Finally, the mean number of word problems answered correctly neither increased nor decreased from Test 1 to Test 3.

#3 Anecdotal Notes

Anecdotal notes were taken by the teacher. These notes recorded comments students made while working on word problems, as well as which strategies students used most frequently.

Analysis

Strategies Most Commonly Used

It was interesting to see which strategies students picked. On both the square roots and exponents tests, students tended to draw schematic diagrams. During these chapters we solved many problems that required students to find the area or perimeter of rectangles and triangles. Students immediately drew the appropriate shape and labeled their diagrams. These problems usually had less information for students to sort through, and they almost always contained the word "area", "perimeter", or "length", so students did not have to guess what they were being asked to do. Equations are more difficult to set up and solve than area or perimeter problems. On the equations test, students usually identified the parts of the question that gave clues about how to write the equation. However, no student drew a diagram for this test's word problems. They may have been less successful because they did not draw a picture, or they may simply not have understood equations as well as they understood square roots and exponents. According to the Likert scale survey data, students felt they had the tools to solve word problems but were not confident in solving them. This was evident on the equations test: students were able to cross out unnecessary information, underline mathematical words, and circle numerical information, but they did not feel comfortable taking the next steps.

Post-Research Conversations

After looking at the data from the unit tests, I was surprised to see that over time there did not seem to be a positive change in the number of word problems students answered correctly or even attempted. After Test 3 (equations), I decided to ask a few students what they thought.

Student 1

Question: Why didn't you try the word problems?

"I don't like them, they're not needed. There's all these other questions plus word problems and it's hard for people to figure out. It's easier when it's just an equation. I know it's real world math but I still don't like them."

Student 2

Question: What would help you solve word problems?

"If someone helped me set up the equation. I know how to solve equations but I don't know how to write them. There's too many words."

Student 3

Question: Why didn't you try the word problems?

"I just didn't really understand equations so that's why I didn't try the word problems."

It seems that students don't answer word problems for a combination of the reasons I already suspected: they dislike them, they struggle to translate the words into an equation, or they do not understand the underlying material. I encouraged the students who didn't understand the material to come in for extra help.